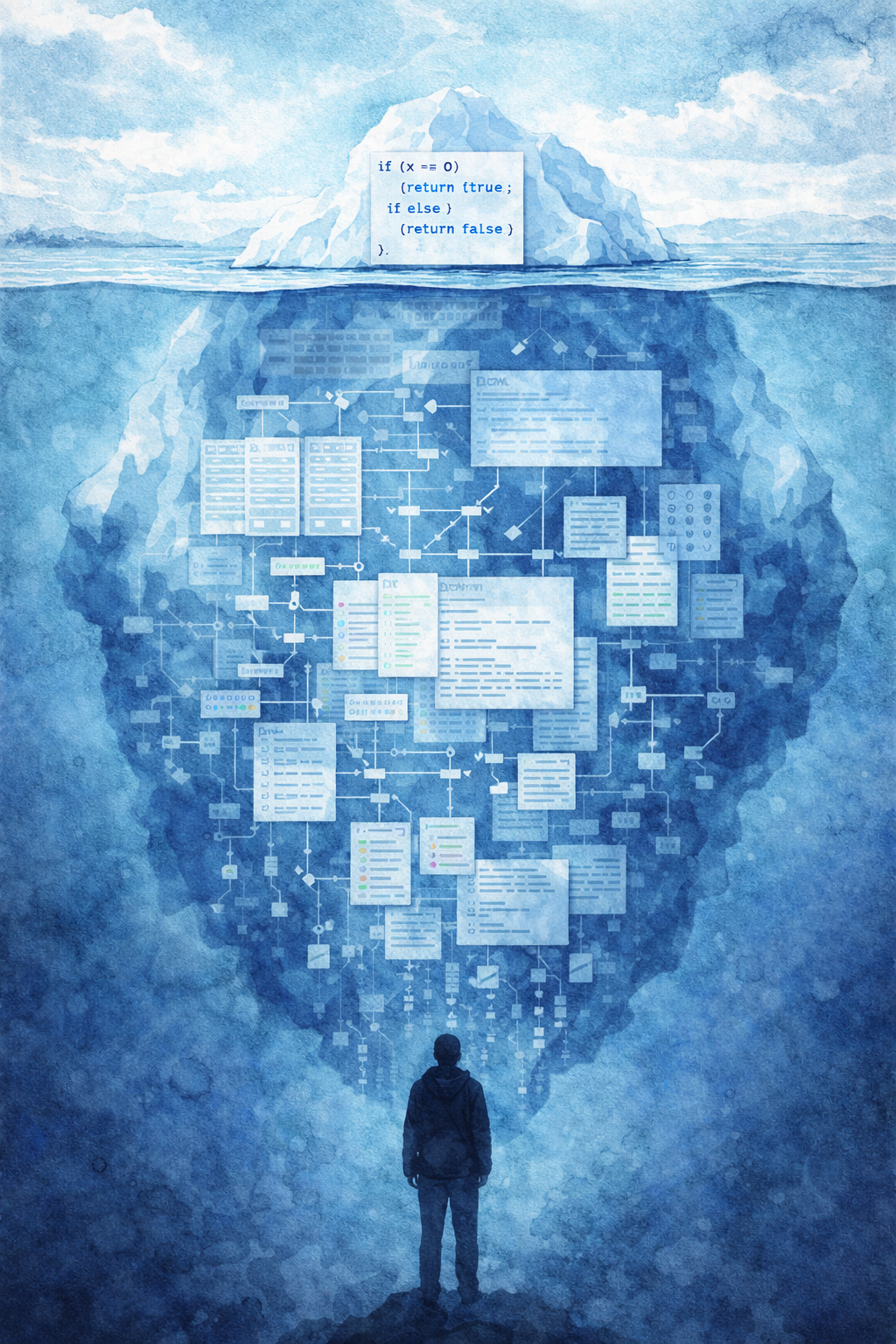

The bottleneck moved: we no longer pay to write software, we pay to understand it well enough to trust it.

Something is shifting in how software gets built, and most of the conversation misses what matters.

A developer rebuilt a backend overnight, then cleaned up 175 TypeScript errors across 33 files by running 18 agents in parallel. (DEV Community) Inside Anthropic, leaders describe workflows where models produce effectively all the code, while humans shift into prompting, coordination, and deciding what to build. (IT Pro)

Many people are circling this from different angles. This post is my attempt to reason from first principles about what happens next, and to name the structures I think are emerging:

1. The Rehydration Law

2. The Forkability Trilemma

3. The Intent-Compilation Theorem

Together they point to the same conclusion:

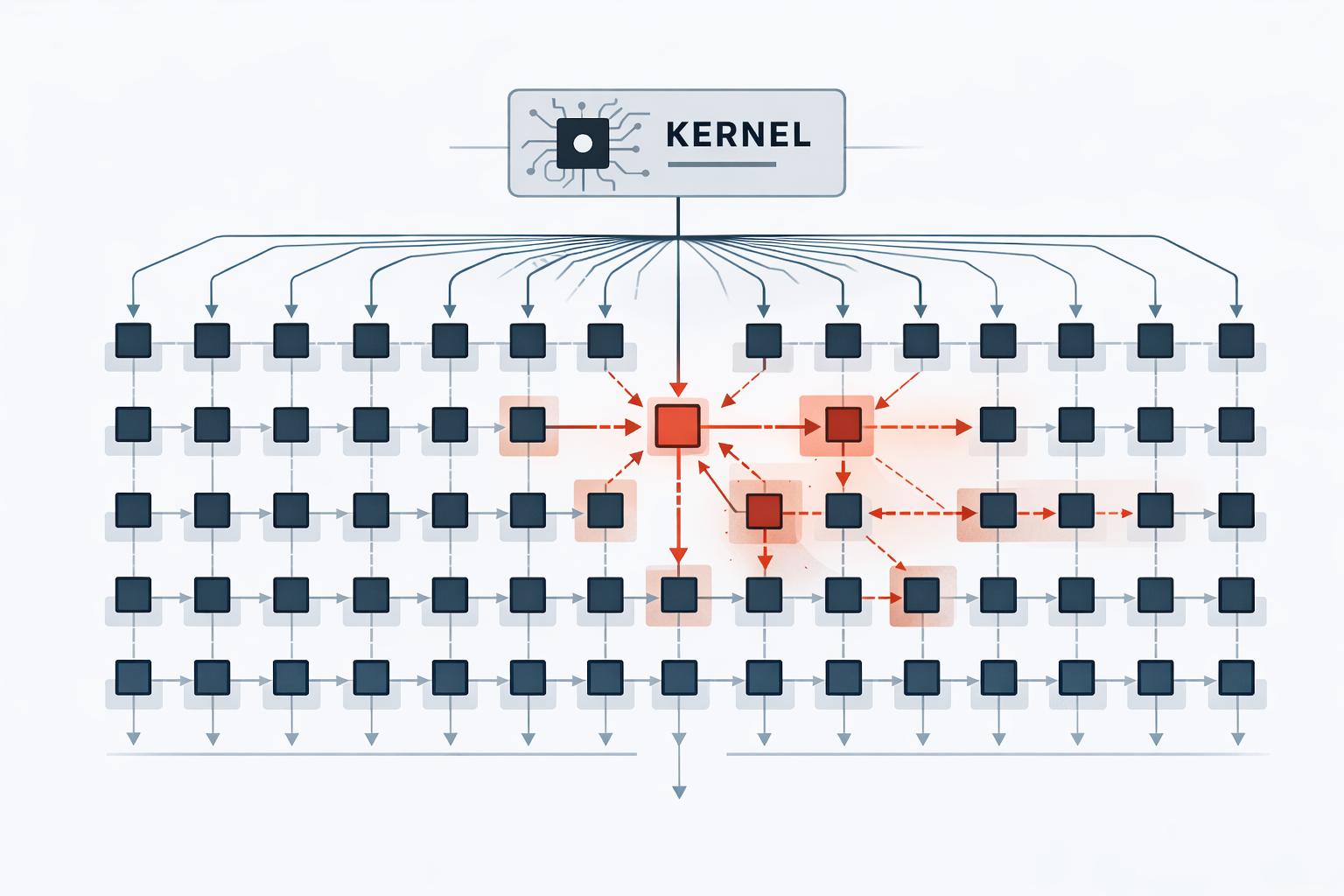

At some point, teams will stop building universal platforms with infinite knobs and say: fuck it, fork it—make it small enough to understand.

- The shift: Generation is cheap. Comprehension and assurance are expensive.

- The Rehydration Law: As systems grow, understanding dominates change cost.

- The Forkability Trilemma: Shared upstream, high variability, low understanding cost. Pick two.

- The Intent-Compilation Theorem: The source of truth shifts from code to intent and constraints. Code becomes a generated artifact.

- The flip: When understanding a system costs more than regenerating it, the obvious choice is to regenerate rather than keep maintaining it.

- The practical move: Build small kernels. Invest in industrial verification. Plan for fleet patching.

What I am adding: the formalization. A cost model with a concrete decision boundary, a trilemma that constrains platform strategy, and an operational layer (ForkOps) for patching and governing specialization fleets. None of these observations are new on their own. This post's contribution is wiring them together from first principles into a single argument.

Bugs accelerate the shift

The obvious objection: "LLMs write buggy code. If we let them rewrite systems, we will drown in defects and vulnerabilities." That is true. And it is exactly why the shift happens.

Here is the state of play in 2026:

- 72% of devs who have tried AI coding tools now use them daily. But 96% do not fully trust the output, and only 48% always verify before committing. (SonarSource)

- Some teams are adopting a "vibe, then verify" workflow. SonarSource reports that reviewing AI-generated code can take more effort than reviewing human code, creating a "verification debt" problem. (SonarSource)

- The most dangerous failure mode is not obvious breakage. It is the illusion of correctness: code that looks polished, reads cleanly, and still hides vulnerabilities. (IT Pro)

- Veracode, which tested 100+ models across languages, frames it plainly: AI code can ship vulnerabilities at scale if you treat generation as the finish line. (Veracode)

If your mental model is "AI will get perfect and then we can trust it," you are waiting for the wrong thing.

When code becomes cheap to generate but expensive to trust, architecture shifts to minimize what must be trusted and maximize what can be verified.

My hard assumptions (so you know what I am betting on)

The conclusions depend on these assumptions, so I might as well state them upfront:

1. Generation gets cheaper faster than understanding gets cheaper. Tooling is making "get working code" very fast, but "be confident it is correct and secure" is lagging.

2. Verification becomes the main work, not a nice-to-have. Teams are already moving toward risk-ranking where LLM code is used and investing in verification infrastructure.

3. The number of variants explodes. If you can build custom apps in days, you will. That means more per-customer workflows, per-team tooling, per-context specializations.

4. Security incidents punish "too big to understand." If the system cannot be audited, it cannot be trusted. That becomes a board-level constraint, not a code-style preference.

If you grant these assumptions, the architecture shift is not a vibe. It is economics.

1) The Rehydration Law

Rehydration is the cost of reconstructing a correct working model of a system before you can safely modify it. (Not the frontend rendering kind. The "how does this thing actually work and what will break if I touch it" kind.)

Not "read the code." Rehydrate the meaning: which invariants are real, where the security boundaries live, how configs and flags interact with runtime behavior, what is safe to touch without breaking production, and what will silently fail if you get it wrong.

Every developer knows this cost. Onboarding takes weeks because of it. "Just a small fix" turns into a three-day archaeology expedition because of it. The person who wrote the system is often the only one who can change it safely.

In large organizations, implementation has already been cheaper than comprehension for years. AI did not create this asymmetry. But it is certainly amplifying it.

The Rehydration Law

As systems grow, the dominant cost shifts from producing code to rehydrating the understanding needed to change it safely.

When AI drops generation cost below rehydration cost, the rational architecture is "minimal kernel + compiled specialization," not "giant platform + endless configuration."

A toy inequality that captures it:

- G = cost to generate/modify code

- R = cost to rehydrate enough understanding to change safely

- V = cost to verify (tests, static analysis, security gates, audits)

The flip threshold:

If (G + V) < R, you regenerate/specialize instead of maintaining generality.

As a decision rule: if a change requires touching many modules and interpreting layers of configuration, prefer regeneration from spec plus verification. If a change is localized inside the trusted kernel, prefer direct maintenance with heavy review.

A simple proxy: the Rehydration Ratio (RR) = time spent understanding / time spent changing. When RR is consistently above 1, you are paying more to comprehend than to build. That is when regeneration starts to win. In manufacturing, once inspection time exceeds assembly time, you redesign the line. The RR is the software equivalent.
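A minimal Python sketch of the flip threshold and the RR proxy, assuming you can put rough estimates on G, R, and V in the same unit (hours here). The names `ChangeEstimate`, `should_regenerate`, and `rehydration_ratio` are hypothetical, and the numbers are illustrative, not measurements.

```python
from dataclasses import dataclass


@dataclass
class ChangeEstimate:
    """Rough cost estimates for one proposed change, all in the same unit."""
    generate: float   # G: cost to generate or modify the code
    rehydrate: float  # R: cost to rebuild enough understanding to change it safely
    verify: float     # V: cost of tests, static analysis, security gates, audits


def should_regenerate(c: ChangeEstimate) -> bool:
    """Flip threshold from the text: regenerate when (G + V) < R."""
    return (c.generate + c.verify) < c.rehydrate


def rehydration_ratio(hours_understanding: float, hours_changing: float) -> float:
    """RR = time spent understanding / time spent changing."""
    if hours_changing <= 0:
        raise ValueError("hours_changing must be positive")
    return hours_understanding / hours_changing


# Illustrative numbers only (assumed, in hours).
sprawling_platform = ChangeEstimate(generate=2, rehydrate=24, verify=8)
small_kernel_fix = ChangeEstimate(generate=2, rehydrate=3, verify=8)

print(should_regenerate(sprawling_platform))  # True: 2 + 8 < 24
print(should_regenerate(small_kernel_fix))    # False: 2 + 8 is not < 3
print(rehydration_ratio(24, 2))               # 12.0
```

The point of writing it down is less the arithmetic than the habit: if nobody on the team can estimate R for a change, that is itself a signal that R is high.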

We already pay rehydration tax in tokens: bigger context windows and agent loops are just ways of buying comprehension. They scale with system complexity, not with the change you want to make. That is R.

And notice what the bug reality does: it pushes V up, which is scary. But it pushes R up even more for giant systems. Bugs do not kill this shift. Bugs accelerate the demand for architectures with smaller trusted surfaces.

The rational response is not "stop using AI." It is "structure systems so R collapses." And the fastest way to collapse R is to make the system smaller and more explicit.

This architecture rhymes with an old one. Exokernels and library operating systems made the same bet in the 1990s: a minimal kernel that multiplexes resources, with application-specific libraries providing the abstractions. We had this debate in OS design. Now we are having it again, with LLMs and agents as the new forcing function.

Failure mode: verification does not scale

The critical bet is that V does not explode faster than R comes down. Distributed boundaries, emergent behavior, cross-service invariants, and adversarial security surfaces can all push V up hard. If verification remains partially manual, the inequality never flips at scale, and the industry doubles down on stricter shared platforms instead. The entire thesis rests on verification tooling maturing fast enough to keep V manageable, and that is not guaranteed. The Rehydration Law breaks when the domain is so coupled that specialization increases V faster than it reduces R; distributed systems with emergent cross-service invariants are the canonical example. That said, verification tooling is advancing faster than most people expect. I personally would not bet against it.

2) The Forkability Trilemma

Every org wants these three things at once:

1. One shared upstream (single codebase, centralized updates)

2. High variability (many integrations, custom workflows, per-customer behavior)

3. Low rehydration cost (small, auditable, comprehensible surface)

The Forkability Trilemma

You can optimize for any two of:

- one shared upstream

- high variability

- low rehydration cost

You do not get all three at scale.

Upstream + variability gives you the classic modern platform: flags, plugin registries, config DSLs, "support everything." It becomes a combinatorial machine where behavior lives in the cross-product of code and configuration. Rehydration becomes permanent tax.

Variability + low rehydration cost gives you specializations: purpose-built deployments that stay small enough to reason about.

Upstream + low rehydration cost means you cap scope. You ship a narrow product, very well, and you say "no" to most customer requests.

How to tell which corner you are in:

- Cross-product of flags and configuration determines behavior → upstream + variability

- Many bespoke deployments with schema drift and patch anxiety → variability + low rehydration

- Narrow product, frequent "no," small surface area → upstream + low rehydration
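To make the "cross-product of flags and configuration" point concrete, here is a small Python sketch that counts the behaviors a platform exposes through runtime knobs, compared with a specialization that pins every knob at build time. The flag names and options are hypothetical.

```python
from itertools import product
from math import prod

# Hypothetical runtime knobs for a shared platform.
flags = {
    "auth_mode": ["password", "sso", "api_key"],
    "billing": ["invoice", "card"],
    "region": ["eu", "us", "apac"],
    "audit_logging": [True, False],
    "beta_workflows": [True, False],
}

# Every combination is a behavior someone may have to understand and verify.
config_space = prod(len(options) for options in flags.values())
print(config_space)  # 72

# A specialization pins each knob at build time, so only one combination is reachable.
pinned = {
    "auth_mode": "sso",
    "billing": "invoice",
    "region": "eu",
    "audit_logging": True,
    "beta_workflows": False,
}
reachable = [
    combo for combo in product(*flags.values())
    if dict(zip(flags, combo)) == pinned
]
print(len(reachable))  # 1
```

Five modest knobs already give 72 combinations, and each new knob multiplies the total. Pinning does not make that cost vanish; it moves it from one large configuration space to many small deployments.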

AI makes variability cheap, which pulls you toward the "specializations" corner unless you intentionally resist it. And buggy AI code makes the "un-auditable universal platform" strategy less tolerable, because it raises the stakes on comprehension and verification.

One tension worth naming: forking reduces configuration complexity but increases fleet complexity. You trade a combinatorial config surface for schema migrations per fork, integration version skew, and regulatory constraints per deployment. The trilemma does not go away. It changes shape.

And standardization does not disappear. It just converges on specific layers, not the full stack: kernel interfaces, policy formats, verification protocols, regulatory compliance artifacts. The same way TCP/IP standardized the protocol without standardizing the applications. Or containers standardized the deployment unit without standardizing the code inside. Implementation fragments. Constraints converge.

A clarification on what I mean by "forking": the architecture I am arguing for is not "literally Git-fork the repo 5,000 times." It is fork the intent, not the implementation. You maintain a small kernel and a set of intent bundles (specs, constraints, policies, tests, procedures), and each deployment gets a specialized artifact compiled from its intent bundle plus the shared kernel.

The obvious objection: "Great, now how do you patch 5,000 specializations?" You patch the kernel, regenerate artifacts, re-run gates. It becomes a compilation problem, not a heroics problem. I will come back to this.

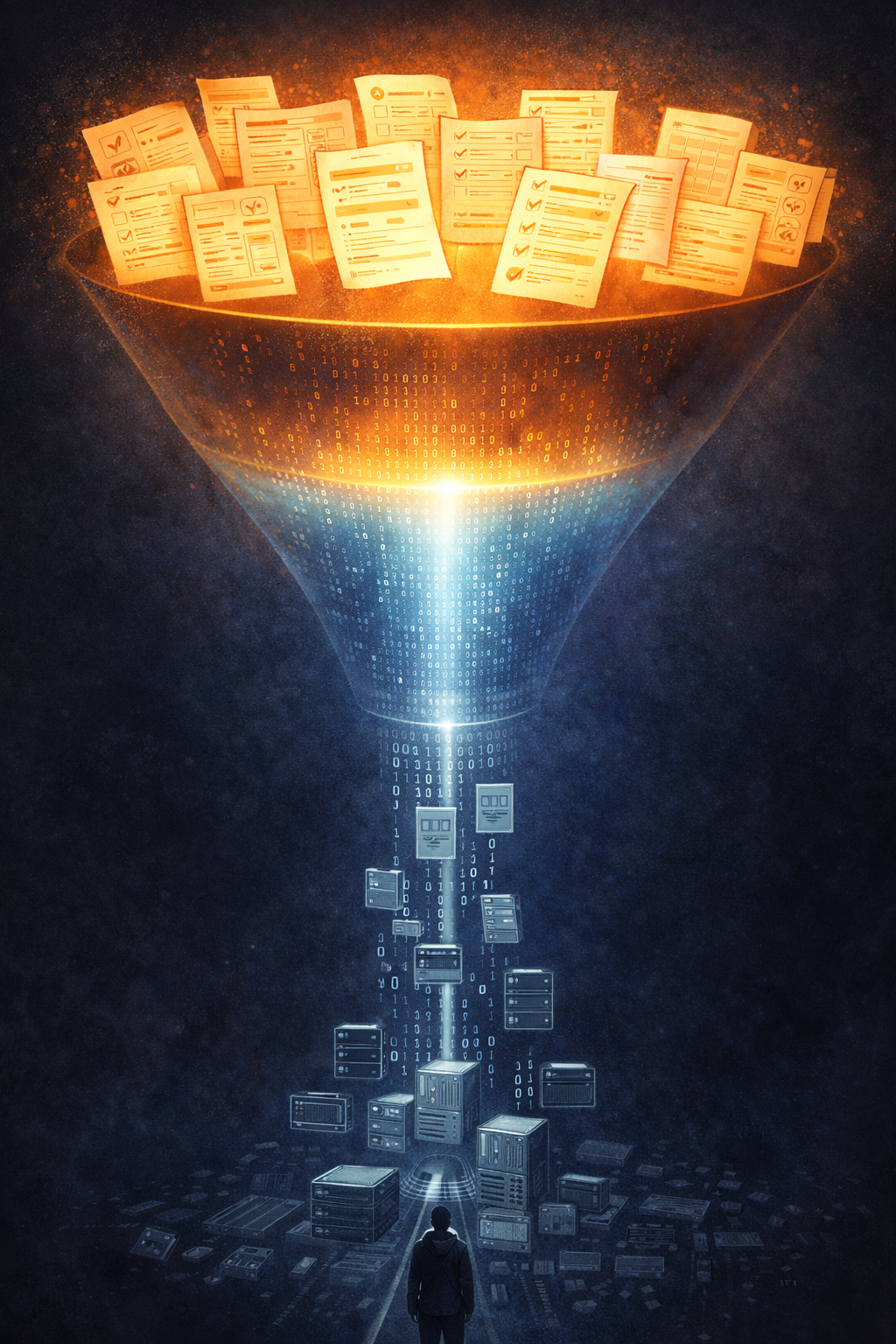

3) The Intent-Compilation Theorem

People love to say "we will program in English." That is not the real point, and it is usually a trap.

The real shift is about what becomes the source of truth.

There is a pattern here. Software has moved up abstraction layers for decades:

Assembly. C. Managed runtimes. Frameworks. Declarative infrastructure. Policy-as-code.

Each step moved the source of truth upward. Charles Simonyi saw this in 1995 when he proposed Intentional Programming: intent as the primary artifact, code as generated output. The idea is not new. What changed is that cheap generation and mechanized verification now make the economics work at scale.

The Intent-Compilation Theorem

When code generation is cheap and verification is mechanized, the source of truth moves up the stack: from implementation to intent + constraints.

Code becomes a compiled artifact: useful, but replaceable.

The intent layer is not "a prompt." It is:

- specs and acceptance tests

- invariant checks

- policies: "never exfiltrate secrets," "no shell access," "PII redaction required"

- capability constraints and sandbox rules

- provenance and rollback rules

- procedural skills that describe how to do common tasks safely

I have no idea what exact shape this will take, but here is a rough sketch of what an intent bundle might look like:

    intent_bundle:
      invariants:
        - "payment.amount_cents > 0"
        - "refund.total <= payment.amount"
      security:
        - "no_network_egress_except: [payments_api, audit_log]"
        - "pii_redacted_in_logs: true"
      acceptance_tests:
        - "refunds_are_idempotent"
        - "webhooks_are_verified"
      threat_model:
        - "assume_prompt_injection: true"
        - "assume_dependency_compromise: possible"

This is not a prompt. It is a build manifest plus a safety case. The source-of-truth test: when requirements change, what do you edit first? If the answer is code, you are still in the old world. If the answer is the intent bundle, you are in the new one.
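One way to picture the bundle as something a build step could check mechanically: a Python sketch in which each invariant is a named predicate over a record. The `IntentBundle` class, the `violated` method, and the flat record fields are all hypothetical, and the sketch assumes both amounts are expressed in cents.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Record = Dict[str, int]  # assumed flat record: one payment plus its refund total


@dataclass
class IntentBundle:
    """Hypothetical in-memory form of an intent bundle; not a real schema."""
    invariants: Dict[str, Callable[[Record], bool]]
    security: Dict[str, object] = field(default_factory=dict)
    acceptance_tests: List[str] = field(default_factory=list)

    def violated(self, record: Record) -> List[str]:
        """Return the names of invariants that do not hold for this record."""
        return [name for name, check in self.invariants.items() if not check(record)]


bundle = IntentBundle(
    invariants={
        "payment.amount_cents > 0":
            lambda r: r["payment_amount_cents"] > 0,
        "refund.total <= payment.amount":
            lambda r: r["refund_total_cents"] <= r["payment_amount_cents"],
    },
    security={"pii_redacted_in_logs": True},
    acceptance_tests=["refunds_are_idempotent", "webhooks_are_verified"],
)

ok = {"payment_amount_cents": 5000, "refund_total_cents": 1200}
over_refunded = {"payment_amount_cents": 5000, "refund_total_cents": 9000}

print(bundle.violated(ok))             # []
print(bundle.violated(over_refunded))  # ['refund.total <= payment.amount']
```

The design choice worth noticing is that the invariant names survive as data. A failed gate can report exactly which line of the bundle was broken, rather than pointing at a stack trace in generated code.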

If you want to picture what this looks like in a repo:

    /kernel/        # policy enforcement, sandbox boundaries, stable primitives
    /intent/        # specs, invariants, acceptance tests, threat model, data contracts
    /compiler/      # transformations, generators, guardrails
    /verification/  # static analysis, property tests, fuzzing, SBOM, provenance
    /artifacts/     # build outputs: signed, reproducible, regenerable

Two things are sacred: the kernel and the verification harness. Everything else is regenerable.

We stopped writing assembly not because compilers were perfect, but because they were good enough and we built tooling around them: debuggers, profilers, test harnesses. The same thing is happening now. We will stop treating codebases as sacred texts not because LLMs become gods, but because we will build the equivalent tooling: verifiers, sandboxes, provenance, and rebuildable artifacts.

If we would not hand-edit a compiler's output, why are we still building systems where we must hand-edit the output forever?

Boundary conditions

Each concept has a failure mode worth naming:

- Rehydration Law: breaks when the domain is so tightly coupled that splitting it apart creates more verification burden than it saves in comprehension.

- Forkability Trilemma: softens when variability is shallow and isolates behind stable interfaces. If customization fits behind an API boundary, upstream + variability stays tractable. The trilemma bites when variability touches invariants, security boundaries, or data models.

- Intent-Compilation: breaks when the intent layer itself becomes a sprawling pseudo-language with weak test coverage. Then you have just moved the mess up a layer. Intent bundles must stay smaller and more auditable than the code they replace, or you have gained nothing.

"Okay, but is software engineering dead then?"

None of this means engineers become obsolete.

"Typing code" is dying. Engineering is not.

The value moves upstream into:

- turning messy reality into crisp specs and invariants

- designing verification harnesses

- defining policy boundaries and permissions

- threat modeling AI-assisted changes

- choosing what to build and what to not build

- running systems of record in production

The future software engineer is part compiler-writer, part test engineer, part product strategist, part security engineer.

What disappears is not work. What disappears is the illusion that implementation is the core of the job.

"And SaaS is dead too, right?"

You hear this one a lot. It is wrong, but there is a kernel of truth in it.

A lot of SaaS companies are basically: a workflow UI, a permissions model, a data store, and integrations. Agents can eat the UI layer first. So "SaaS is dead" is wrong, but "SaaS as UI" is in real trouble.

The moat shifts. The defensible parts become:

- data gravity and data quality

- deep integration and enterprise controls

- governance, compliance, audit trails

- reliability and trust

- distribution and switching costs

SaaS does not die. It re-bundles. And the Forkability Trilemma explains how. If customers can get bespoke workflows cheaply, the pressure rises to abandon one-size-fits-all products with giant config sprawl.

The market splits into:

1. Systems of record (data + compliance + reliability)

2. Intent compilers (tools that turn policy/specs into systems/actions)

3. Fork fleet management (governance, patch propagation, provenance, reproducibility)

4. Verification infrastructure (the "vibe, then verify" pipeline made industrial)

If you are building SaaS today, the question becomes: are you selling a UI, or are you selling a trusted system of record and governance substrate that agents cannot bypass? Pricing follows accordingly: seat-based models weaken when the "user" is an agent, and value shifts toward verified outcomes: transactions processed, workflows executed, errors prevented.

"What about the solo builder?"

There is a limit case worth naming: the indie dev who ships a SaaS product alone. Vibe-coding solo builders are the purest embodiment of cheap generation. One person, an LLM, and a weekend can now produce what used to take a team months. They move fast, they market well, and they do not need to ask anyone for permission.

But they are not verification people. Most solo builders ship with minimal tests, no formal threat model, and whatever security the framework gives them for free. That works while the product is small, because R is low. A single person can hold the whole system in their head. The Rehydration Law does not bite yet.

The problem arrives with success, though how fast depends on what you are building. An AI photo editor can stay simple for years. A product that touches payments, personal data, third-party APIs, privacy regulations, or multi-tenant state gets there much sooner. Either way, users grow, features accumulate, integrations multiply, and eventually the solo builder is maintaining a system too large to rehydrate alone. They hit the same wall that enterprises hit, just with fewer resources. The difference is that an enterprise can hire a security team. A solo dev probably cannot.

Two outcomes seem likely. Either verification tooling gets cheap and automated enough that a solo dev can run industrial-grade gates without thinking about it (this is the optimistic path, and it is where a lot of the tooling investment is headed). Or solo products that handle real money, real data, or real liability get squeezed out by the trust gap. The market will forgive a buggy side project. It will not forgive a payment processor that leaks credentials because nobody ran a security audit.

The indie golden age is real. But it runs on the same inequality as everything else: (G + V) < R. As long as V stays near zero because the product is small and low-stakes, it works. The moment stakes rise, so does V, and the solo builder has to decide whether to invest in verification or stay small.

The missing piece: how you patch 5,000 forks

If specializations win, here is the screaming objection:

"Fine. Now I have 5,000 specialized deployments. How do I ship a security fix?"

That is not a reason the fork world fails. It is the reason an entire discipline is born: ForkOps.

ForkOps needs four things that do not exist yet as standard infrastructure:

The Intent Bundle Manifest. A versioned, signed artifact that declares: invariants, policies, test suite pointers, data contracts, threat model, and provenance. Each specialization carries one. It is the unit of governance.

The Patch Lift protocol. When a kernel vulnerability is found: patch the kernel, compute a rebuild plan across the fleet, generate proof obligations per specialization, re-run verification gates, and roll out only what passes. This is not "merge commits across 5,000 repos." It is "recompile and re-prove."

The Proof Obligation Set. The machine-generated list of invariants, policies, and tests that must be re-proven for a given specialization after a kernel change. This is Patch Lift's internal unit of work.

The Fleet Attestation Ledger. A queryable record of which specializations are rebuilt, proven, and deployed against which kernel and verifier versions. CI/CD became real when builds and deploys became inspectable objects. ForkOps becomes real when "rebuild-and-prove" does too.
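None of this exists as standard infrastructure, so what follows is only a Python sketch of the smallest useful version: a content digest for an intent bundle and an append-only ledger that can answer "which specializations are not yet proven on kernel X?" Every name here (`digest_bundle`, `Attestation`, `FleetLedger`, the version strings) is hypothetical. A SHA-256 digest stands in for real signing, which this sketch does not attempt.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Dict, List


def digest_bundle(bundle: dict) -> str:
    """Content hash of an intent bundle: canonical JSON, then SHA-256.

    A digest only identifies the content; a real manifest would also be signed.
    """
    canonical = json.dumps(bundle, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class Attestation:
    """One ledger row: what was rebuilt, against what, and whether gates passed."""
    specialization: str
    bundle_digest: str
    kernel_version: str
    verifier_version: str
    gates_passed: bool


class FleetLedger:
    """Append-only, in-memory stand-in for a Fleet Attestation Ledger."""

    def __init__(self) -> None:
        self._records: List[Attestation] = []

    def record(self, attestation: Attestation) -> None:
        self._records.append(attestation)

    def stale(self, kernel_version: str) -> List[str]:
        """Specializations whose latest record is not a passing build on this kernel."""
        latest: Dict[str, Attestation] = {}
        for rec in self._records:  # later records overwrite earlier ones
            latest[rec.specialization] = rec
        return sorted(
            name for name, rec in latest.items()
            if rec.kernel_version != kernel_version or not rec.gates_passed
        )


acme = digest_bundle({"invariants": ["payment.amount_cents > 0"]})
globex = digest_bundle({"invariants": ["refund.total <= payment.amount"]})

ledger = FleetLedger()
ledger.record(Attestation("acme-refunds", acme, "1.4.0", "0.9", True))
ledger.record(Attestation("globex-payouts", globex, "1.4.0", "0.9", True))
ledger.record(Attestation("acme-refunds", acme, "1.4.1", "0.9", True))

print(ledger.stale("1.4.1"))  # ['globex-payouts']
```

Sorting keys before hashing means two bundles with the same content but different key order get the same digest, which is what lets the ledger treat the bundle as a stable unit of governance.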

In the kernel + compiler model, patch propagation becomes mechanical:

- patch the kernel

- patch the verifier

- regenerate artifacts per intent bundle

- re-run gates that prove invariants still hold

- roll out with attestation
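The regenerate, re-run gates, and roll-out steps above can be sketched as a single loop. This is a toy under stated assumptions: `compile_artifact` is a placeholder for a real generator, gates are plain predicates over the artifact, and the kernel and verifier patches are represented only by a version string. All names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Artifact = Dict[str, str]
Gate = Callable[[Artifact], bool]


@dataclass
class Specialization:
    name: str
    intent: Dict[str, str]  # stand-in for a full intent bundle
    gates: Dict[str, Gate]  # named verification gates (the proof obligations)


def compile_artifact(kernel_version: str, spec: Specialization) -> Artifact:
    """Placeholder compiler: a real one would generate code from intent + kernel."""
    return {"kernel": kernel_version, **spec.intent}


def patch_lift(kernel_version: str, fleet: List[Specialization]) -> Dict[str, List[str]]:
    """Rebuild every specialization on the patched kernel; roll out only what passes."""
    report: Dict[str, List[str]] = {"rolled_out": [], "held_back": []}
    for spec in fleet:
        artifact = compile_artifact(kernel_version, spec)
        failed = [name for name, gate in spec.gates.items() if not gate(artifact)]
        report["held_back" if failed else "rolled_out"].append(spec.name)
    return report


# Shared gates for the example; real fleets would derive these per specialization.
gates: Dict[str, Gate] = {
    "on_patched_kernel": lambda a: a["kernel"] == "1.4.1",
    "pii_redacted": lambda a: a.get("pii_redacted_in_logs") == "true",
}

fleet = [
    Specialization("acme-refunds", {"pii_redacted_in_logs": "true"}, gates),
    Specialization("globex-payouts", {"pii_redacted_in_logs": "false"}, gates),
]

print(patch_lift("1.4.1", fleet))
# {'rolled_out': ['acme-refunds'], 'held_back': ['globex-payouts']}
```

A specialization that fails a gate is held back rather than force-merged. That is the difference between "recompile and re-prove" and patching by hand.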

This is exactly where "verification debt" turns from a metaphor into a line item. (SonarSource)

The orgs that win will treat this like CI/CD treated deployments: not heroics, but a pipeline.

Fork-first without verification and patch propagation is reckless. Fork-first with industrial verification is what comes next.

What happens next

If I play this forward with my hard assumptions, it is possible, maybe probable, that not everything gets forked. I would envision the outcome as a split.

Regulated cores survive. Massive shared systems of record with strict APIs, strong compliance, and data gravity. These get more valuable, not less, because agents cannot route around reality. Enterprises will likely keep boring central platforms where liability and audit requirements demand it, but even these will face constant pressure to re-evaluate as verification tooling improves and the economics shift.

Generated edges proliferate. Per-customer workflow modules, internal tools, integration glue, low-liability services. These get forked aggressively. Minimal kernels, intent bundles, compiled specializations, heavy automated verification.

The two patterns coexist. The regulated core handles state, compliance, and trust. The generated edge handles velocity, customization, and experimentation. The boundary between them becomes the most important architectural decision in the stack.

The fork-first model dominates where verification tooling matures and liability is low. Shared platforms survive where data gravity, compliance, and standardization reward central control.

But somewhere on the generated edge, a cultural moment hits. A team stares at their platform, its thousand flags and config files, and decides they are done. Just like we stopped hand-writing assembly because compilers got good enough, they will stop pretending they can scale complexity with abstraction layers. And they will say:

"Fuck it. Make it minimal. Fork it. Ship something we can actually understand."

That will not be the whole industry. But it will be enough of it to matter. And if the economics keep trending this way, orgs that specialize early will outpace the ones still maintaining monoliths.

Some predictions I am willing to be wrong about:

- Within 18 months, many orgs will treat tests and policies as primary artifacts and routinely regenerate large portions of implementation.

- "Config sprawl" will be reframed as an audit liability, not a flexibility advantage.

- A new class of tools will emerge around variant fleet patching, provenance, and reproducible regeneration.

- Code review will shift from reading diffs to validating invariants, capability boundaries, and supply-chain provenance.

If you build systems: what to do this quarter

Five things you can do now:

1. Measure rehydration cost so you can see where complexity taxes you. Track time-to-first-safe-change, files read vs. files modified, and verification cycles per change. If you cannot measure it, you cannot manage it.

2. Shrink the trusted kernel so audits and incidents have a bounded blast radius. Keep policy enforcement, sandboxing, provenance, and verification stable. Everything else becomes regenerable.

3. Move variability to build time so behavior is explicit and testable. Replace runtime config sprawl with build-time specialization where possible.

4. Invest in verifiers so generation speed does not become incident speed. Tests, invariants, static analysis, SBOMs, reproducible builds, and policy gates are what make regeneration trustworthy.

5. Plan for patch propagation so specialization does not create security paralysis. Fork-first without a patch strategy is malpractice.
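For the first item, a rough Python sketch of what tracking could look like, assuming you log one record per merged change. The `ChangeRecord` fields mirror the three metrics named above; the class, the `summarize` function, and the sample numbers are hypothetical.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List


@dataclass
class ChangeRecord:
    hours_to_first_safe_change: float  # from picking up the task to a change you trust
    files_read: int
    files_modified: int
    verification_cycles: int           # test/review/gate rounds before merge


def summarize(changes: List[ChangeRecord]) -> Dict[str, float]:
    """Aggregate rehydration signals over a set of changes."""
    if not changes:
        raise ValueError("need at least one change record")
    modified = sum(c.files_modified for c in changes)
    if modified == 0:
        raise ValueError("no files modified; ratio is undefined")
    return {
        "median_hours_to_first_safe_change": median(
            c.hours_to_first_safe_change for c in changes
        ),
        "files_read_per_file_modified": sum(c.files_read for c in changes) / modified,
        "mean_verification_cycles": sum(c.verification_cycles for c in changes) / len(changes),
    }


# Made-up sample data for illustration.
log = [
    ChangeRecord(hours_to_first_safe_change=6, files_read=40, files_modified=2, verification_cycles=3),
    ChangeRecord(hours_to_first_safe_change=1, files_read=5, files_modified=1, verification_cycles=1),
    ChangeRecord(hours_to_first_safe_change=12, files_read=75, files_modified=3, verification_cycles=5),
]

print(summarize(log))
```

In this made-up log, people read 20 files for every file they modify. Tracked per module, a number like that shows where the complexity tax is being paid.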

Prior art

The raw observations have precursors: "code is cheap, trust is expensive" (Willison, Nadh, Gregori, Vogels); comprehension dominating maintenance cost (von Mayrhauser & Vans, 1995); intent-as-source-of-truth (Simonyi, 1995); variability trade-offs (Software Product Line Engineering); minimal kernels (exokernels); and patch propagation across forks (Machiry et al., 2020; Pan et al., 2024). This post's contribution is the synthesis into an engineering model: (G + V) < R, RR, the Forkability Trilemma, intent bundles, and ForkOps (manifests, Proof Obligation Sets, Patch Lift, Fleet Attestation Ledger).

Sources

- SonarSource: State of Code Developer Survey (72% daily use, 96% trust gap, 48% always verify)

- SonarSource: Verification Gap in AI Coding ("vibe, then verify," verification debt)

- IT Pro: AI-generated code and the "illusion of correctness"

- Veracode: GenAI Code Security Report

- DEV Community: Rebuilding a backend overnight with Claude Code

- IT Pro: Cherny and Amodei on AI and software engineering

- Simon Willison: Code is cheap

- Kailash Nadh: Code is cheap

- Chris Gregori: Code is cheap now. Software is not

- Simonyi (1995): The Death of Computer Languages, the Birth of Intentional Programming

- von Mayrhauser & Vans (1995): Program Comprehension During Software Maintenance and Evolution

- CMU: Software Product Line Engineering

- Machiry et al. (2020): SPIDER: Enabling Fast Patch Propagation in Related Software Repositories

- Pan et al. (2024): Automating Zero-Shot Patch Porting for Hard Forks

- Engler et al. (1995): Exokernel: An Operating System Architecture for Application-Level Resource Management

- Vogels (re:Invent 2025): Verification debt and the AI coding gap

Post edit: If you want to know what happens when this plays out (the variant fleets, the patch economics, the winter), I wrote that story too: The Fork Winter (2029).